Sayash Kapoor

4/16/2026

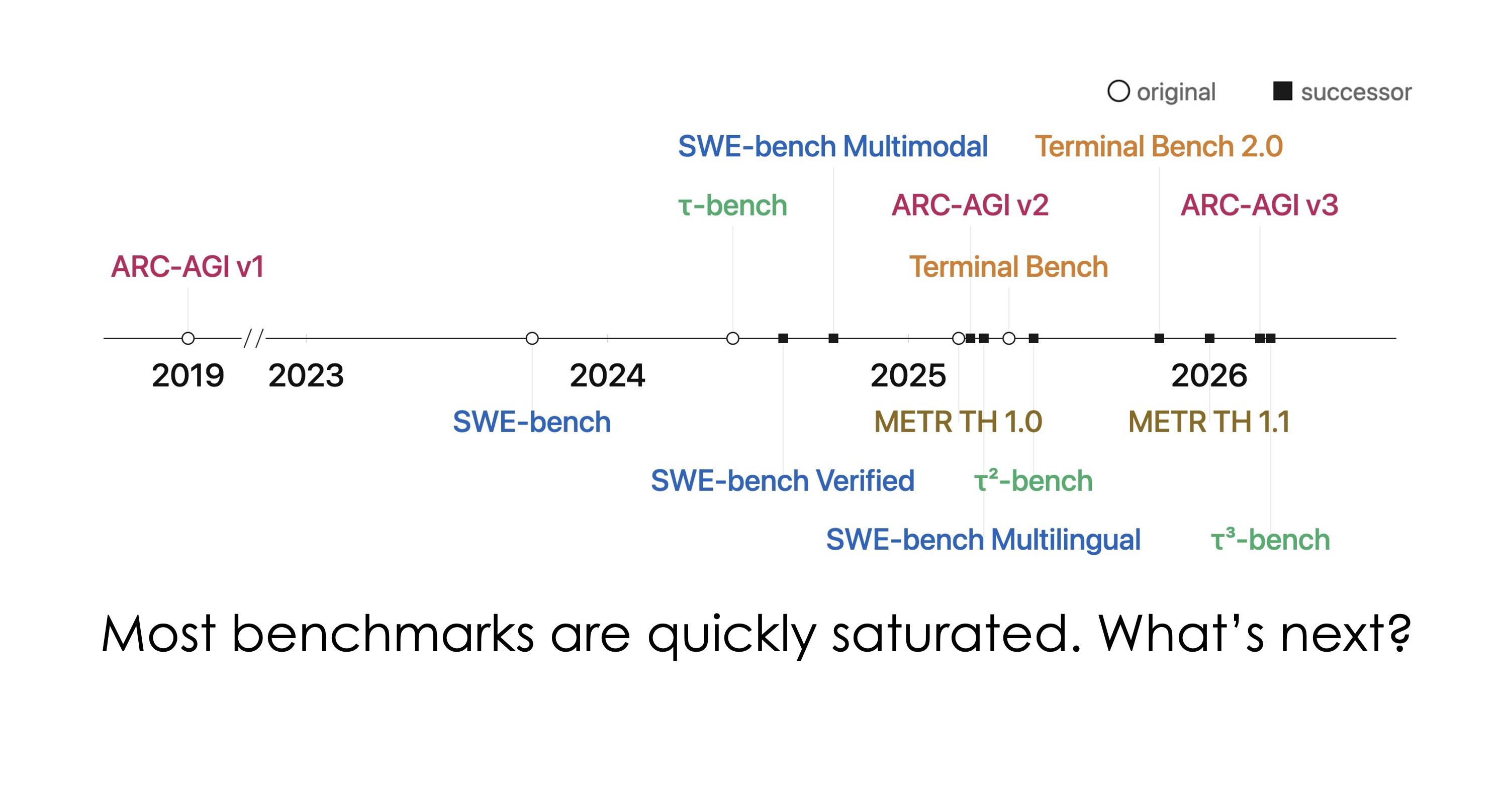

Open-world evaluations for measuring frontier AI capabilities

TL;DR

CRUX is a new evaluation project designed to measure frontier AI capabilities on long, complex open-world tasks, moving beyond traditional benchmarks. The approach targets real-world complexity rather than controlled test environments. The announcement provides limited details, but it reflects a growing focus on practical AI evaluation methodologies.

- CRUX announced as a new AI evaluation framework

- Targets long, messy real-world tasks

- Minimal implementation details disclosed

Generated with AI, which can make mistakes.
